ChatGPT allegedly shared users’ query topics, user IDs, and email addresses with Google and Meta, new class action lawsuit claims

Millions of people use ChatGPT to ask questions they would never post publicly. They ask about depression, debt, marriage problems, medical symptoms, legal trouble, and career fears. A new lawsuit filed in federal court alleges that some of those conversations may have been quietly funneled into the advertising systems of Meta and Google through tracking technology embedded in ChatGPT’s website.

The proposed class action, filed Tuesday in the U.S. District Court for the Southern District of California, accuses OpenAI of secretly transmitting users’ chat query topics and identifying data to Meta and Google without consent. The complaint alleges OpenAI embedded Meta’s Facebook Pixel and Google Analytics code into ChatGPT.com, allowing user activity to be intercepted and forwarded in real time.

The case, Couture v. OpenAI Global, LLC (Case No. 3:26-cv-03000), was brought by California resident Amargo Couture on behalf of all U.S. residents who entered queries into ChatGPT, along with a California subclass.

“This is a class action lawsuit brought on behalf of all United States residents who have accessed and entered queries into ChatGPT.com (the ‘Website’), a website Defendant owns and operates,” the complaint states.

Why AI chat privacy is becoming a legal minefield

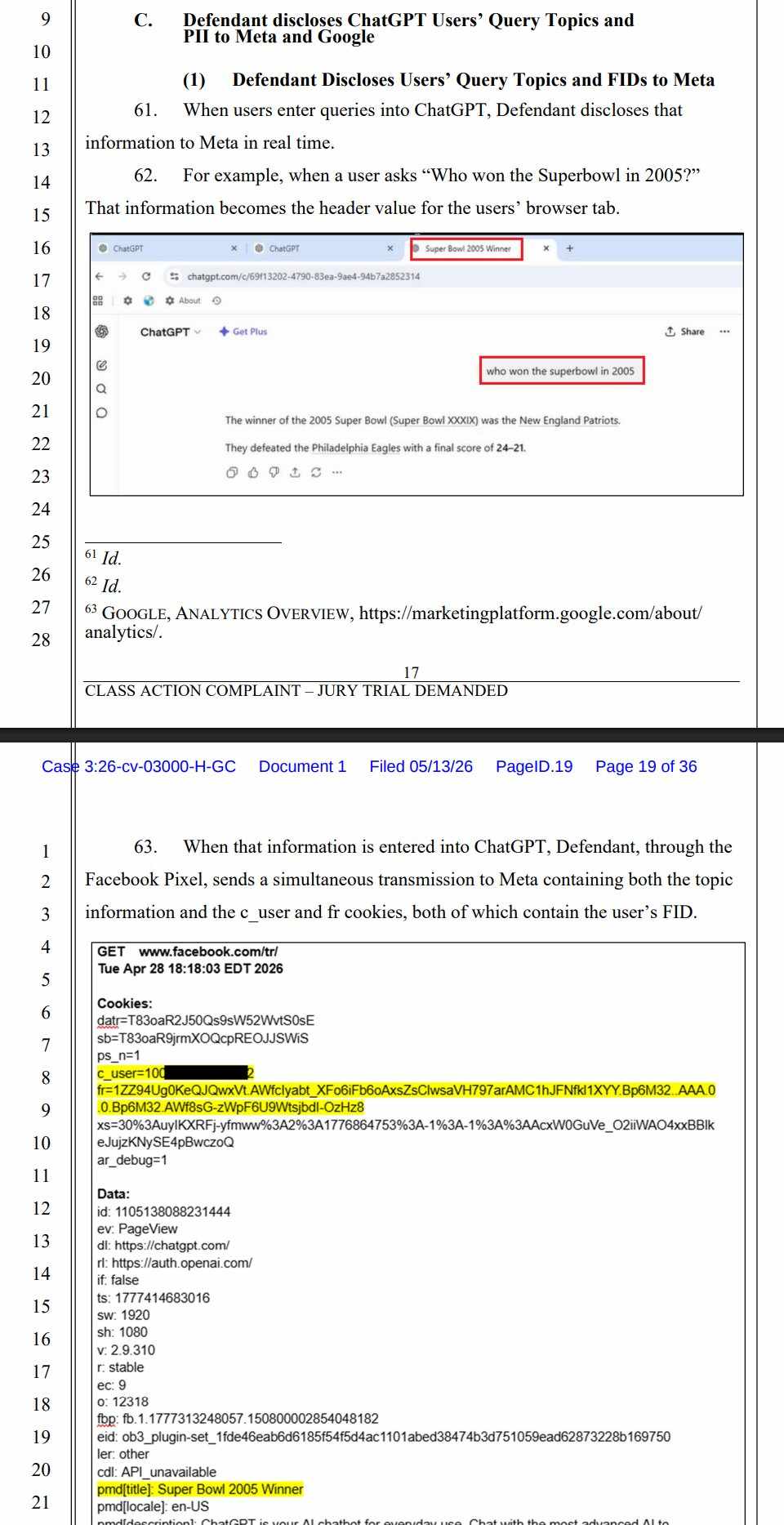

At the center of the lawsuit is a claim that ChatGPT’s browser tab titles dynamically reflect the topic of a user’s conversation, and that those titles were allegedly transmitted alongside identifying cookies connected to Facebook and Google accounts.

“Defendant owns and operates ChatGPT, an AI chatbot service designed to provide answers to almost any question a user asks, including queries regarding sensitive and personal topics like the user’s finances, health, and legal issues. So much information is put into ChatGPT that some sources estimate ‘The average company leaks confidential material to ChatGPT hundreds of times per week.’ The same is true of individuals, who increasingly rely on ChatGPT to gather information and advice to their most personal issues. As such, personal privacy on ChatGPT is an issue with broad implications for individuals’ control of their privacy and personal information,” the complaint adds.

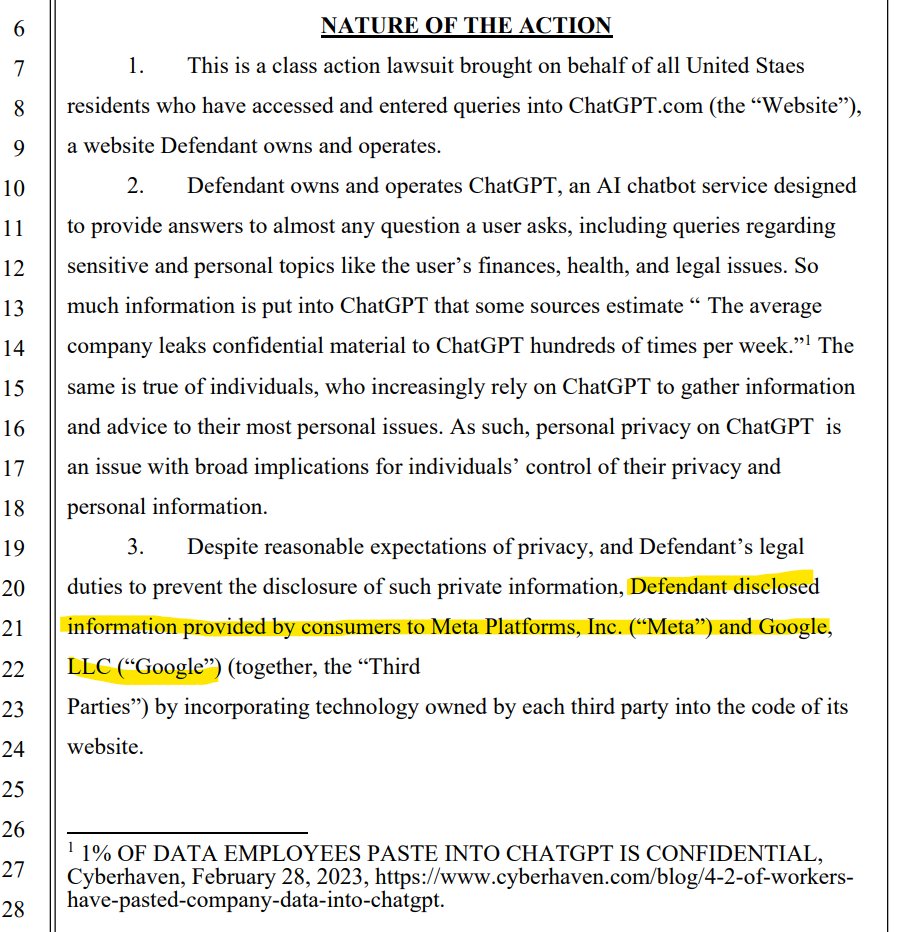

The complaint gives a simple example. If a user asks ChatGPT, “Who won the Super Bowl in 2005?” the browser tab updates to display that topic. According to the lawsuit, Meta Pixel and Google Analytics then transmit that information, along with identifiers tied to the user’s accounts, to external servers.

The filing claims Meta received Facebook cookies, including the unencrypted “c_user” identifier and the “fr” cookie, which are tied to a user’s Facebook ID. Google allegedly received data tied to Google profile cookies such as “__Secure-3PSID,” along with hashed login-related identifiers.
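The mechanism the complaint describes can be illustrated with a minimal TypeScript sketch. It is not Meta’s or Google’s actual code or wire format: the endpoint, parameter names, and the `buildPageViewRequest` function are hypothetical, chosen only to show how a page title and an identifying cookie could travel in the same request. In a real browser, cookies belonging to the third party’s domain are attached to the request by the browser itself rather than read by the page’s script; the sketch models that by taking the cookies as an input.

```typescript
// Hypothetical illustration only. Endpoint, parameter names, and cookie
// values are assumptions, not the real Meta Pixel or Google Analytics format.

interface PageInfo {
  title: string; // the browser tab title, which the complaint says reflects the chat topic
  url: string;   // address of the page the user is viewing
}

interface OutgoingRequest {
  url: string;          // tracker endpoint plus query string describing the page
  cookieHeader: string; // cookies the browser would attach for the tracker's domain
}

// Builds the request a tracking script might trigger on a page view.
function buildPageViewRequest(
  endpoint: string,
  page: PageInfo,
  thirdPartyCookies: Record<string, string>
): OutgoingRequest {
  // Page details are URL-encoded into the query string.
  const query = [
    "event=PageView",
    "title=" + encodeURIComponent(page.title),
    "page=" + encodeURIComponent(page.url),
  ].join("&");

  // Cookies are serialized the way a Cookie header carries them.
  const cookieHeader = Object.keys(thirdPartyCookies)
    .map((name) => name + "=" + thirdPartyCookies[name])
    .join("; ");

  return { url: endpoint + "?" + query, cookieHeader };
}

// Example using the question quoted in the complaint and a made-up user ID.
const example = buildPageViewRequest(
  "https://tracker.example/collect",
  { title: "Who won the Super Bowl in 2005?", url: "https://chat.example/c/123" },
  { c_user: "1000000001" }
);
console.log(example.url);
console.log(example.cookieHeader);
```

The point of the sketch is that neither piece of data is revealing on its own terms in the same way: the title describes what was asked, the cookie identifies who asked, and the complaint’s theory depends on both arriving together.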

“Despite reasonable expectations of privacy, and Defendant’s legal duties to prevent the disclosure of such private information, Defendant disclosed information provided by consumers to Meta Platforms, Inc. (‘Meta’) and Google, LLC (‘Google’) (together, the ‘Third Parties’) by incorporating technology owned by each third party into the code of its website,” the complaint alleges.

The lawsuit describes the process as a “secret and contemporaneous” duplication of users’ communications carried out through JavaScript code embedded on the website.

“Meta’s embedded code, written in JavaScript, sends secret instructions back to the individual’s browser, without alerting the individual that this is happening,” the complaint states.

Similar allegations were made about Google Analytics.

Couture claims she used ChatGPT to ask sensitive questions involving health and finances while logged into Facebook and Google accounts in the same browser session. The lawsuit argues that this constituted an unreasonable invasion of privacy and exposed confidential communications to third-party advertising platforms.

The complaint alleges violations of the federal Electronic Communications Privacy Act, the California Invasion of Privacy Act (CIPA), and California’s constitutional privacy protections. The suit seeks class certification, injunctive relief, statutory damages, and other remedies. Under CIPA, statutory damages can reach $5,000 per violation without requiring proof of actual harm.

The lawsuit comes amid a growing wave of privacy litigation targeting common website-tracking tools. Over the past few years, plaintiffs’ firms have filed thousands of lawsuits accusing companies of violating California wiretapping laws by using analytics scripts, pixels, chat widgets, and session-recording software.

Business groups and defense attorneys have pushed back aggressively against those claims, arguing that California’s 1967 wiretap law was never intended to regulate routine internet analytics and advertising infrastructure.

A nearly identical lawsuit was filed last month against AI search startup Perplexity, which was accused of transmitting user query information through similar tracking technologies.

OpenAI has not publicly responded to the allegations. The company’s privacy policy states that it may share information with vendors, service providers, and partners for analytics and business purposes. The lawsuit argues that users were never clearly informed that their chat topics and identifiers could be transmitted in real time to Meta and Google via embedded tracking code.

AI chatbots are becoming the internet’s newest privacy battleground

Privacy researchers say Facebook Pixel and Google Analytics are among the most widely used tracking tools on the internet. Millions of websites use them to measure traffic, conversions, ad performance, and user behavior. Critics have warned for years that those systems can expose sensitive browsing activity when improperly configured.

One cybersecurity analyst told Cybernews that the trackers are “not very privacy-friendly,” though the analyst added that users who agree to platform terms of service may have limited expectations of complete confidentiality online.

The case is still in its earliest stage. OpenAI has not yet filed a formal response, and the court has not ruled on whether the alleged transmissions qualify as unlawful interceptions under federal or California privacy law.

If the lawsuit survives early dismissal attempts and obtains class certification, the potential exposure could be massive. Tens of millions of Americans have used ChatGPT since its launch, many treating the AI chatbot less like a search engine and more like a personal advisor.

That shift is part of what makes this case different.

People increasingly use AI systems to discuss issues they would normally reserve for therapists, lawyers, doctors, or close family members. The lawsuit taps into a growing fear of the AI era: users may trust these systems far more deeply than they trust traditional websites, even though many of the same advertising and analytics technologies still operate behind the scenes.
