25 Most Popular AI Terms Everyone Should Know in 2026

Artificial intelligence is everywhere, and it’s rewriting how the world works. Along with these massive shifts comes an entirely new vocabulary. Spend just a few minutes reading about AI, and you’ll run into LLMs, RAG, RLHF, and a dozen other terms that can make even seasoned tech professionals feel slightly lost.

Let’s be honest: the jargon can feel like a fortress of tech-speak.

The good news? You don’t need a PhD in computer science to understand the AI revolution. You just need to understand the language. From the buzzwords dominating boardroom meetings to the concepts powering your favorite apps, we’re breaking down the most popular AI terms in plain English. No confusing code. No academic jargon. Just clarity.

Consider this glossary your roadmap to the modern AI era. Because the AI field evolves almost daily, we regularly update this guide, making it a living document much like the AI systems it describes.

A year ago, terms like “AI agents,” “RAG,” “context engineering,” and “vibe coding” barely existed in mainstream conversation. Today, they dominate startup pitches, earnings calls, developer communities, job listings, and millions of posts across X.

Artificial intelligence hasn’t just introduced new technology. It has introduced an entirely new language.

From builders creating AI-powered apps to marketers adapting to AI search, people across industries are trying to keep up with a flood of new concepts, acronyms, and internet-native slang spreading at internet speed.

To build this list, we analyzed some of the most widely discussed AI terms trending across X, developer communities, enterprise AI discussions, and the broader tech industry in 2026.

Some of these terms explain how modern AI systems work. Others reflect the culture, hype, fears, business opportunities, and internet conversations surrounding artificial intelligence.

Whether you’re a founder, developer, investor, student, marketer, or simply curious about where technology is heading, these are the AI terms everyone is talking about right now.

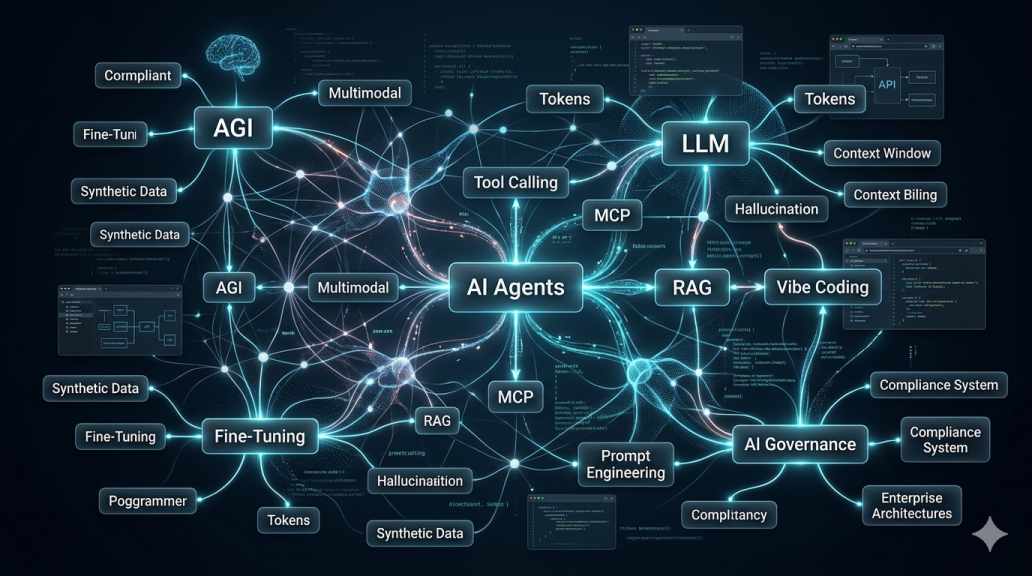

A visual map of the modern AI ecosystem. Image credit: Gemini.

LLM (Large Language Model)

What is an LLM?

LLM stands for Large Language Model, a type of AI system trained on massive amounts of text data to understand and generate human-like responses. Models like OpenAI’s GPT, Anthropic’s Claude, Google’s Gemini, and xAI’s Grok are all examples of LLMs.

These systems can write emails, summarize documents, answer questions, generate code, and hold conversations that often feel surprisingly human.

Why it matters

LLMs are the foundation of today’s AI boom. Nearly every major AI product people use today, from chatbots to coding assistants and AI search tools, is powered by some form of large language model.

The rise of LLMs has also triggered a global race for chips, data centers, energy infrastructure, and AI talent as companies compete to build smarter and larger models.

Why people are talking about it

LLMs dominate conversations across X because they sit at the center of the AI race. Every few weeks, a new model launches claiming better reasoning, faster coding, lower costs, or more human-like responses.

The competition between OpenAI, Google, Anthropic, Meta, and xAI has turned LLM development into one of the biggest technology battles in modern history.

AI Agents / Agentic Workflows

What are AI Agents?

AI agents are AI systems designed to perform tasks autonomously with limited human involvement. Instead of simply responding to prompts, agents can plan steps, make decisions, use software tools, browse the web, call APIs, write code, and complete multi-step workflows.

Agentic workflows refer to the process of chaining these actions together so AI can handle increasingly complex tasks.

Why it matters

Many people in Silicon Valley believe AI agents could become the next major software platform after SaaS.

Instead of humans manually clicking through apps, AI agents may eventually handle scheduling, research, customer support, coding, data analysis, and business operations automatically.

This shift could reshape how software is built and how people work.

Why people are talking about it

“AI agents” has become one of the hottest phrases on X as startups race to build autonomous AI workers and digital assistants.

Companies like OpenAI, Anthropic, Google, and Microsoft are all investing heavily in agentic systems, while developers constantly share demos of agents booking flights, building apps, managing tasks, and automating workflows.

RAG (Retrieval-Augmented Generation)

What is RAG in AI?

RAG stands for Retrieval-Augmented Generation, a technique that allows AI systems to pull information from external sources before generating answers.

Instead of relying only on what the model learned during training, RAG lets AI retrieve documents, databases, search results, or company knowledge in real time.
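For readers who like to see the idea in action, here is a minimal sketch of the retrieve-then-generate pattern. It is a toy under stated assumptions: the three "documents," the word-overlap scoring, and the helper names (`retrieve`, `build_prompt`) are all made up for illustration. Real RAG systems typically use embeddings and a vector database, and then send the finished prompt to an LLM.

```python
import re

# Hypothetical in-memory knowledge base (a real system would use a document store).
DOCUMENTS = [
    "Refund policy: purchases can be returned within 30 days.",
    "Support is open Monday to Friday.",
    "Shipping to Europe takes 5 to 7 business days.",
]

def words(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = words(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & words(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, documents):
    """Put the retrieved text in front of the question before asking the model."""
    context = "\n".join(retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

prompt = build_prompt("What is the refund policy?", DOCUMENTS)
print(prompt)
```

The key point is the order of operations: the system looks things up first, then hands the model both the question and the retrieved text.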

Why it matters

RAG helps reduce hallucinations and improves accuracy, especially in enterprise settings where current and reliable information matters.

It has become one of the most important building blocks for AI assistants used in healthcare, finance, legal services, customer support, and enterprise search.

Why people are talking about it

RAG exploded in popularity as businesses realized that general-purpose chatbots were not reliable enough for serious work.

On X, developers frequently discuss RAG pipelines, vector databases, embeddings, and enterprise AI systems connected to internal company data. It has become one of the most widely discussed techniques for building useful real-world AI applications.

AI Hallucination

What is an AI hallucination?

An AI hallucination occurs when an AI model generates false, misleading, or entirely fabricated information while sounding confident.

Examples include fake legal citations, invented facts, incorrect statistics, or fabricated sources.

Why it matters

Hallucinations remain one of the biggest weaknesses in modern AI systems.

In industries such as law, healthcare, finance, and education, inaccurate AI-generated information can pose serious risks. That’s one reason companies continue investing heavily in verification systems, RAG architectures, and AI safety research.

Why people are talking about it

The term became mainstream after users started sharing examples of AI systems confidently making things up.

Stories involving AI-generated fake court cases, inaccurate search summaries, and misleading chatbot responses helped push “hallucination” into public conversation far beyond the tech industry.

Vibe Coding

What is Vibe Coding?

Vibe coding is a popular AI slang term for building software by telling an AI what you want in plain English, rather than manually writing every line of code yourself.

The phrase was popularized by Andrej Karpathy in 2025 and quickly spread across developer communities on X.

Why it matters

Vibe coding represents a major shift in how software may be built in the AI era.

Instead of focusing solely on syntax and technical implementation, developers increasingly act as directors, guiding AI systems through ideas, iterations, and product design.

The trend is also lowering barriers for non-technical founders who want to build apps and tools without a traditional engineering background.

Why people are talking about it

Vibe coding became one of the most viral AI phrases on X as developers started sharing stories of building apps, games, and startups almost entirely through AI prompts.

The term is especially tied to AI coding tools from companies like Cursor, OpenAI, and Anthropic, which are changing how programmers write and ship software.

Generative AI (GenAI)

What is Generative AI?

Generative AI, often shortened to GenAI, refers to AI systems that can create new content such as text, images, videos, music, code, and audio.

Unlike traditional software that follows fixed rules, generative AI produces original outputs based on patterns learned from massive datasets.

Tools like OpenAI’s ChatGPT, Adobe’s Firefly, and Google’s Gemini are all examples of generative AI systems.

Why it matters

Generative AI kicked off one of the largest technology shifts since the rise of the internet and smartphones.

It is already reshaping industries, including software development, marketing, education, design, entertainment, customer support, and search.

The technology is also changing how companies think about productivity, automation, and creative work.

Why people are talking about it

“GenAI” became one of the defining business and technology buzzwords of the decade as companies rushed to integrate AI into products and workflows.

The phrase appears frequently in startup pitches, earnings calls, investor presentations, job listings, and enterprise software announcements on X and across the broader tech industry.

Prompt Engineering

What is Prompt Engineering?

Prompt engineering is the practice of designing clear and effective instructions for AI systems to produce better results.

Instead of simply asking AI random questions, prompt engineering involves structuring prompts carefully using context, examples, formatting rules, goals, and constraints.

Why it matters

The quality of an AI system’s output often depends heavily on the quality of the prompt.

Good prompts can dramatically improve coding accuracy, writing quality, research depth, reasoning, and workflow automation.

Prompt engineering has also become a valuable skill for developers, marketers, analysts, founders, and non-technical professionals using AI tools in daily work.

Why people are talking about it

Prompt engineering exploded on X during the early AI boom as users shared prompt tricks, workflows, and “prompt hacks” to get better results from tools like ChatGPT.

At the same time, many users began joking about “prompt fatigue” as prompts became increasingly long and complicated, especially for advanced AI workflows and agents.

AI Slop

What is AI Slop?

AI slop is a slang term for low-quality, mass-produced AI-generated content.

The phrase is commonly used for spammy articles, generic social posts, fake images, clickbait videos, and repetitive AI-generated material flooding the internet.

Why it matters

As AI tools become easier to use, the internet is being flooded with enormous amounts of cheap automated content.

This has raised concerns about misinformation, declining content quality, fake engagement, and the growing difficulty of separating authentic human work from machine-generated noise.

Why people are talking about it

“AI slop” became a viral insult across X as users criticized lazy AI-generated posts, fake AI art, low-effort SEO articles, and content farms pumping out automated content at scale.

The phrase is now widely used in debates about the future quality of the internet in the AI era.

Tokens and Context Window

What are Tokens and Context Windows?

Tokens are the small chunks of text AI models process instead of reading full words or sentences directly. A token can be a word, part of a word, punctuation, or even spaces.

A context window is the maximum amount of text, measured in tokens, that an AI model can process and “remember” at one time during a conversation or task.
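A rough sketch can make both ideas concrete. The tokenizer below is a deliberate simplification that splits text into words and punctuation marks. Production models use learned subword schemes, so real token counts will differ. The function names and the four-token limit are assumptions chosen for illustration.

```python
import re

def toy_tokenize(text):
    """Split text into word tokens and punctuation tokens (a simplification)."""
    return re.findall(r"\w+|[^\w\s]", text)

def fit_to_context(tokens, max_tokens):
    """Keep only the most recent tokens that fit inside the context window."""
    if max_tokens <= 0:
        return []
    return tokens[-max_tokens:]

tokens = toy_tokenize("Hello, world! AI is everywhere.")
print(tokens)       # the sentence as a list of tokens
print(len(tokens))  # how many tokens the sentence uses

# With a tiny 4-token window, the earliest tokens are dropped.
visible = fit_to_context(tokens, max_tokens=4)
print(visible)
```

Anything that falls outside the window is simply not seen by the model, which is why long conversations can "forget" their beginnings.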

Why it matters

Context windows play a huge role in determining how useful an AI system is for coding, research, analysis, long conversations, and document processing.

Larger context windows allow AI models to handle books, lengthy codebases, spreadsheets, contracts, and complex workflows more effectively.

Why people are talking about it

Developers and AI users constantly compare token limits and context windows when evaluating models from OpenAI, Google, Anthropic, and Meta.

On X, discussions about “who has the biggest context window” have become a regular part of model-launch debates and benchmark wars.

Multimodal AI

What is Multimodal AI?

Multimodal AI refers to AI systems that can process multiple types of information simultaneously, including text, images, audio, video, and voice.

Instead of handling only one format, multimodal models can combine different inputs and outputs in a single interaction.

Why it matters

Multimodal AI is making AI systems feel more human and interactive.

It allows users to upload screenshots, analyze videos, speak naturally with AI assistants, generate images from text, and interact with AI across multiple communication channels simultaneously.

This is pushing AI beyond simple chatbots into more advanced digital assistants and creative tools.

Why people are talking about it

Multimodal AI has become one of the biggest themes in the AI race as companies compete to build systems that can see, hear, speak, and reason across different media types.

Products from Google, OpenAI, Meta, and xAI have fueled constant discussion on X around image generation, voice AI, video AI, and real-time assistants.

AGI (Artificial General Intelligence)

What is AGI?

AGI stands for Artificial General Intelligence, a theoretical form of AI capable of performing nearly any intellectual task a human can do.

Unlike today’s AI systems, which are usually specialized for specific tasks, AGI would be able to reason, learn, adapt, and solve problems across many domains without needing separate training for each.

Why it matters

Many researchers believe AGI could become the most transformative technology in human history.

Supporters argue it could accelerate scientific discovery, medicine, education, and productivity. Critics warn it could also create major economic disruption, security risks, and questions about human control.

The possibility of AGI is one reason governments and tech companies are investing hundreds of billions of dollars in AI infrastructure.

Why people are talking about it

AGI has become one of the most debated topics on X, especially as modern AI systems continue to improve faster than many experts expected.

Arguments over “when AGI arrives” have become a constant online battle among AI optimists, skeptics, researchers, founders, and safety advocates.

MCP (Model Context Protocol)

What is MCP?

MCP, short for Model Context Protocol, is an open standard that enables AI models to securely connect to external tools, applications, databases, and workflows.

Many developers describe it as a universal communication layer that allows AI systems to interact with software more reliably and consistently.

Why it matters

MCP could become a foundational building block for AI agents and enterprise AI systems.

Instead of every AI tool using its own custom integration method, MCP aims to create a shared standard for connecting models with calendars, CRMs, APIs, databases, coding tools, and other services.

Why people are talking about it

MCP exploded across developer communities on X after Anthropic introduced it in late 2024 and it gained support from the broader open-source ecosystem.

Many developers now view MCP as one of the most important technologies enabling real-world agentic AI systems.

GEO (Generative Engine Optimization)

What is GEO?

GEO stands for Generative Engine Optimization, the practice of optimizing content to make it more likely that AI systems will reference, summarize, or cite it in generated answers.

Instead of focusing only on traditional search engine rankings, GEO focuses on visibility inside AI-generated responses.

Why it matters

As more users turn to AI assistants rather than traditional search engines, companies are beginning to rethink how online visibility works.

The rise of GEO signals a broader shift from “ranking on Google” to “being included in AI-generated answers.”

Why people are talking about it

GEO became a major topic across X as publishers, marketers, founders, and SEO professionals realized that AI search may dramatically change web traffic patterns.

Many businesses are now trying to understand how to remain visible in an internet increasingly shaped by AI-generated responses.

Fine-Tuning

What is Fine-Tuning in AI?

Fine-tuning is the process of taking a pretrained AI model and training it further on specialized data to improve performance for a specific task or industry.

For example, a company might fine-tune a model using customer support conversations, legal documents, or medical records.

Why it matters

Fine-tuning allows organizations to build AI systems that are more accurate, specialized, and aligned with their own workflows.

It is one of the main reasons AI can be applied across industries, including healthcare, finance, cybersecurity, education, and enterprise software.

Why people are talking about it

Fine-tuning remains one of the most discussed AI topics among developers and startups building custom AI products.

On X, founders and engineers frequently debate whether it’s better to fine-tune models or rely on approaches like RAG and prompt engineering.

RLHF (Reinforcement Learning from Human Feedback)

What is RLHF?

RLHF stands for Reinforcement Learning from Human Feedback, a training method where humans evaluate and rank AI responses to help models learn better behavior.

Instead of learning only from raw internet data, models improve by receiving feedback on which answers are more useful, safer, more accurate, or better aligned with human preferences.

Why it matters

RLHF played a major role in making modern AI chatbots more conversational and user-friendly.

Without techniques like RLHF, many AI systems would produce far more confusing, toxic, or unhelpful outputs.

The method has become one of the key building blocks behind today’s consumer AI assistants.

Why people are talking about it

RLHF is frequently discussed on X during debates about AI alignment, censorship, bias, and model behavior.

Researchers and developers often compare RLHF with newer training approaches as companies compete to build AI systems that feel more natural, helpful, and trustworthy.

MoE (Mixture of Experts)

What is MoE in AI?

MoE, short for Mixture of Experts, is an AI architecture in which only certain parts of a model, called experts, activate for a given input, rather than the entire system running at once.

You can think of it as a company that routes problems to specialized departments rather than having every employee handle every task.
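The routing idea can be sketched in a few lines. This is a toy, not how any particular lab implements it: the four "experts" are trivial made-up functions, and the router scores are passed in by hand, whereas a real model computes them from the input with a learned layer.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical experts: in a real model each one is a neural network block.
EXPERTS = [
    lambda x: 2 * x,
    lambda x: x + 10,
    lambda x: -x,
    lambda x: x * x,
]

def moe_forward(x, router_scores, top_k=2):
    """Run only the top_k highest-scoring experts and blend their outputs."""
    chosen = sorted(
        range(len(EXPERTS)),
        key=lambda i: router_scores[i],
        reverse=True,
    )[:top_k]
    weights = softmax([router_scores[i] for i in chosen])
    output = sum(w * EXPERTS[i](x) for w, i in zip(weights, chosen))
    return output, chosen

output, chosen = moe_forward(3.0, router_scores=[3.0, 2.0, -1.0, 0.5])
print(chosen)  # indices of the experts that actually ran
print(output)  # weighted blend of those experts' outputs
```

Only two of the four experts do any work for this input, which is where the efficiency gain comes from.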

Why it matters

MoE architectures help AI companies build larger and more efficient models without dramatically increasing computing costs.

This allows models to scale faster while using less energy and fewer resources during inference.

Why people are talking about it

MoE became a hot topic on X as developers started discussing how companies like xAI and other frontier AI labs use these architectures to improve efficiency and performance.

It is now one of the most closely watched concepts in the race to build bigger and smarter AI systems.

Shadow AI

What is Shadow AI?

Shadow AI refers to employees using AI tools without official company approval or oversight.

Examples include workers secretly using ChatGPT, Claude, or other AI assistants for writing, coding, data analysis, or internal work tasks.

Why it matters

Shadow AI has become a major concern for businesses because employees may unknowingly expose sensitive company data to external AI systems.

It also creates security, compliance, legal, and governance risks for organizations trying to control how AI is used internally.

Why people are talking about it

The term gained traction on X as companies realized employees were adopting AI tools much faster than corporate policies could keep up.

Many workers openly joke online that entire teams are already relying on AI tools behind management’s back.

Data Moat

What is a Data Moat?

A data moat refers to proprietary or unique data that gives a company a long-term competitive advantage in AI.

This could include customer interactions, workflows, enterprise data, industry-specific datasets, or user behavior collected over many years.

Why it matters

As AI models become increasingly accessible, many investors and founders believe proprietary data may become one of the biggest differentiators between companies.

The idea is simple: better data can lead to better AI products.

Why people are talking about it

“Data moat” has become one of the most common phrases in startup and venture capital discussions on X.

Investors frequently debate whether startups truly have defensible AI advantages or are simply building temporary wrappers around existing models.

AEO (Answer Engine Optimization)

What is AEO?

AEO stands for Answer Engine Optimization, the practice of optimizing content so AI systems can easily surface it in direct answers.

Unlike traditional SEO, which focuses on ranking links in search engines, AEO focuses on becoming part of the answer itself.

Why it matters

AI search tools are changing how people discover information online.

As users increasingly rely on AI-generated summaries rather than clicking through to websites, publishers and businesses are trying to adapt to a future in which visibility may matter more than traffic itself.

Why people are talking about it

AEO has become a major topic on X among marketers, publishers, founders, and SEO professionals preparing for AI-driven search behavior.

Many now believe the internet is entering a “zero-click” era in which AI assistants answer questions directly, without sending users to websites.

Context Engineering

What is Context Engineering?

Context engineering refers to designing the broader environment, instructions, memory, and system behavior that guide how AI systems operate across longer tasks and workflows.

It goes beyond simple prompting by shaping the overall structure surrounding the model.

Why it matters

As AI agents become more advanced, maintaining consistency across long conversations and multi-step workflows becomes increasingly important.

Context engineering helps AI systems stay aligned with goals, remember important information, and behave more reliably over time.

Why people are talking about it

Developers on X increasingly describe context engineering as the “next evolution” of prompt engineering.

The term has gained popularity as builders develop more sophisticated AI agents capable of extended reasoning, memory, and autonomous workflows.

ModelOps

What is ModelOps?

ModelOps, short for Model Operations, refers to the tools, workflows, and processes used to deploy, monitor, manage, and maintain AI models in production environments.

It is often compared to DevOps, but specifically for AI systems.

Why it matters

Building an AI model is only part of the challenge. Companies also need to keep models secure, reliable, updated, and cost-efficient after deployment.

ModelOps helps organizations monitor performance, detect failures, manage updates, and ensure that AI systems continue to work properly at scale.

Why people are talking about it

As more businesses move AI systems into real-world production, ModelOps has become an increasingly prominent topic across X among engineers, infrastructure teams, and enterprise software companies.

The term is especially common in discussions about safely and reliably scaling enterprise AI.

AI Governance

What is AI Governance?

AI governance refers to the rules, policies, oversight systems, and accountability frameworks used to manage how AI systems are developed and deployed.

This includes issues like transparency, bias, security, compliance, safety, and ethical use.

Why it matters

As AI systems become more powerful and widely adopted, governments and businesses are under pressure to ensure these systems are used responsibly.

AI governance is becoming especially important in industries like healthcare, finance, education, defense, and law.

Why people are talking about it

AI governance discussions exploded on X as regulators worldwide began introducing new AI laws and oversight frameworks.

Companies are also facing growing pressure from customers, investors, and policymakers to explain how their AI systems are trained and controlled.

Synthetic Data

What is Synthetic Data?

Synthetic data is artificially generated data created by algorithms, simulations, or AI models rather than collected from real-world sources.

The data is designed to mimic real patterns and behaviors while avoiding many privacy and data-collection issues associated with actual user information.

Why it matters

Synthetic data is becoming increasingly important because high-quality real-world data is often expensive, limited, private, or difficult to obtain.

It is widely used in areas like robotics, autonomous driving, healthcare, cybersecurity, and enterprise AI training.

Why people are talking about it

Synthetic data became a major topic on X as AI companies searched for ways to keep training models without running into privacy restrictions or data shortages.

Many researchers believe synthetic data could become one of the key ingredients powering the next generation of AI systems.

In-Context Learning (ICL)

What is In-Context Learning?

In-context learning, often abbreviated as ICL, refers to an AI model’s ability to learn to perform a task simply by seeing examples within a prompt, without additional training.

For example, showing an AI several examples of a task can help it figure out the pattern and continue performing it correctly.
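Here is a small sketch of what "examples within a prompt" can look like in practice. The reviews, labels, and the `few_shot_prompt` helper are invented for illustration. The resulting text would be sent to whichever model you use, and no training step is involved.

```python
def few_shot_prompt(examples, query):
    """Build a prompt that shows labeled examples before the new input."""
    lines = ["Classify the sentiment of each review as Positive or Negative.", ""]
    for text, label in examples:
        lines.append(f"Review: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The final item is left unlabeled so the model fills in the answer.
    lines.append(f"Review: {query}")
    lines.append("Sentiment:")
    return "\n".join(lines)

examples = [
    ("I loved this phone.", "Positive"),
    ("The battery died in a day.", "Negative"),
]
prompt = few_shot_prompt(examples, "Great camera and fast delivery.")
print(prompt)
```

The model picks up the pattern from the two labeled reviews and is expected to continue it for the third.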

Why it matters

ICL is one of the reasons modern AI systems feel flexible and adaptable.

Instead of retraining models every time users want new behavior, AI can often learn directly from examples provided during a conversation or workflow.

Why people are talking about it

In-context learning is frequently discussed on X in conversations about reasoning models, long-context systems, and prompt design.

Developers often compare “zero-shot” prompting (no examples) with “few-shot” prompting (a handful of examples) when testing how well AI models can generalize.

Hyperautomation

What is Hyperautomation?

Hyperautomation refers to the large-scale automation of business processes through the integration of AI, workflows, robotics, software automation, and autonomous agents.

The goal is to automate as many repetitive and operational tasks as possible across an organization.

Why it matters

Many companies see hyperautomation as the next phase of digital transformation.

Businesses hope AI agents and automation systems can reduce costs, improve efficiency, speed up operations, and eliminate repetitive manual work across departments like customer service, finance, HR, and IT.

Why people are talking about it

Hyperautomation gained momentum on X as enterprises started experimenting with AI agents capable of handling increasingly complex workflows.

The phrase is now common in discussions about the future of work, enterprise productivity, and how AI may reshape white-collar jobs.

Bonus: 5 Emerging AI Terms Starting to Trend in 2026

World Models

What are World Models in AI?

World models are AI systems designed to build an internal understanding of how the world works, including physics, environments, causality, and human behavior.

Instead of simply predicting the next word, these systems attempt to simulate and reason about reality itself.

Why it matters

Many researchers believe world models could play a major role in the future of robotics, autonomous agents, self-driving vehicles, and advanced reasoning systems.

The idea is that AI becomes far more capable when it can predict outcomes and understand how actions affect the environment around it.

Why people are talking about it

World models have become increasingly popular on X as AI researchers push beyond chatbots toward systems capable of planning, simulation, and long-term reasoning.

The term is especially common in discussions around robotics, autonomous AI agents, and the path toward AGI.

Tool Invocation (Tool Calling / Function Calling)

What is Tool Invocation in AI?

Tool invocation, also known as tool calling or function calling, refers to an AI model’s ability to interact with external tools, APIs, software, or databases while performing tasks.

Instead of relying solely on its internal knowledge, the AI can actively use external systems to perform actions or retrieve information.
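A simplified sketch shows the basic handshake. The model does not run anything itself: it produces a structured request, and the surrounding application executes it. Everything here is an assumption for illustration, including the `get_weather` stand-in, the hard-coded model output, and the JSON shape, which is not any specific vendor's format.

```python
import json

def get_weather(city):
    """Hypothetical tool: returns canned data instead of calling a real API."""
    return {"city": city, "forecast": "sunny"}

# Registry of tools the application is willing to run on the model's behalf.
TOOLS = {"get_weather": get_weather}

def run_tool_call(model_output):
    """Parse the model's structured request and execute the matching tool."""
    call = json.loads(model_output)
    tool = TOOLS[call["name"]]
    return tool(**call["arguments"])

# Pretend the model answered a weather question with this tool request.
model_output = '{"name": "get_weather", "arguments": {"city": "Paris"}}'
result = run_tool_call(model_output)
print(result)
```

In a full agent, the result would be passed back to the model so it can write a final answer or decide which tool to call next.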

Why it matters

Tool invocation is one of the core technologies powering modern AI agents.

It allows AI systems to search the web, run code, book meetings, access databases, process payments, and interact with software platforms in real time.

Why people are talking about it

Developers on X frequently share demos that show AI agents autonomously calling multiple tools to complete complex workflows.

The concept has become central to discussions around agentic AI and the future of software automation.

Trusted AI

What is Trusted AI?

Trusted AI refers to AI systems designed to be transparent, explainable, reliable, safe, and fair.

The goal is to ensure AI systems behave predictably and can be trusted in high-stakes environments.

Why it matters

As AI moves deeper into healthcare, finance, education, law, and government, trust is becoming a major concern.

Businesses and regulators increasingly want AI systems that are explainable, auditable, and accountable instead of opaque “black boxes.”

Why people are talking about it

Trusted AI has become a growing topic on X as governments and enterprises demand stronger safeguards around AI deployment.

The phrase often appears in discussions involving regulation, AI safety, enterprise adoption, and public trust.

Promptslop

What is Promptslop?

Promptslop is an internet slang term for lazy, generic, low-effort AI-generated writing that feels repetitive, robotic, or overly polished.

It is often used mockingly to criticize content that clearly “sounds AI-generated.”

Why it matters

The rise of promptslop reflects growing frustration with the flood of generic AI-generated content spreading across the internet.

It also highlights a larger debate around authenticity, originality, and content quality in the AI era.

Why people are talking about it

The term spread quickly across X as users started calling out spammy LinkedIn posts, fake thought leadership threads, and low-quality AI-generated articles.

It became part meme, part criticism, and part warning about what happens when AI-generated content is published without human editing or insight.

AI Compliance

What is AI Compliance?

AI compliance refers to the process of ensuring that AI systems meet the requirements of laws, regulations, security standards, and industry policies.

This includes areas like privacy, transparency, data protection, risk management, and regulatory reporting.

Why it matters

As governments introduce new AI regulations around the world, companies are facing growing pressure to prove their AI systems are safe, secure, and legally compliant.

AI compliance is quickly becoming a major requirement for enterprises deploying AI at scale.

Why people are talking about it

AI compliance has become a major topic of discussion on X among legal experts, CISOs, founders, and enterprise software teams.

The rise of regulations such as the EU AI Act has pushed compliance from a niche legal issue to a mainstream business priority.

Closing

AI is moving so fast that understanding its language is becoming a new form of digital literacy.

Terms like “LLM,” “AI agents,” “hallucination,” and “context engineering” are no longer confined to research labs or Silicon Valley conversations. They are increasingly shaping how people work, build companies, search for information, create content, write software, and interact with technology every day.

Some of these terms may disappear as the AI industry evolves. Others could become foundational concepts that define the next generation of computing.

But one thing is already clear: AI is no longer just a niche technology trend. It is becoming part of the global economy, internet culture, enterprise software, education, media, and daily life.

And as AI continues to reshape the world, those who understand its language early may be better prepared for the changes to come.