DeepSeek launches V4 AI models to challenge OpenAI and Anthropic a year after breakthrough

DeepSeek is back, and it’s not easing into the spotlight. A year after its earlier model rattled Silicon Valley and forced a rethink on how much it really costs to build advanced AI, the Hangzhou-based startup has introduced a new flagship system aimed squarely at the biggest names in the field.

Announcing the launch on X, the company said, “DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.”

The company unveiled preview versions of its V4 series, positioning it as a serious contender against models from OpenAI and Anthropic.

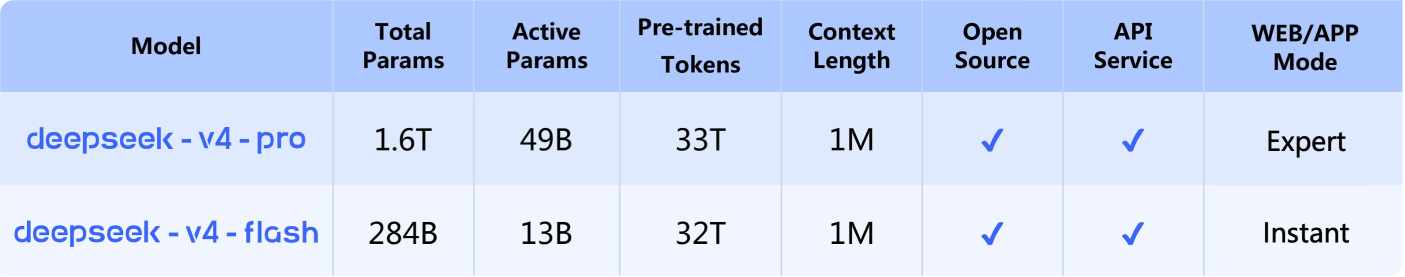

At the center of the release are two variants, V4 Flash and V4 Pro. DeepSeek says both improve on coding benchmarks and show clear gains in reasoning and agent-style tasks. The improvements come from a mix of architectural changes and tighter optimization, with the company highlighting a new approach it calls Hybrid Attention Architecture. The idea is straightforward: help the model retain context over long conversations without losing track of earlier inputs.

That matters more than ever as developers move from short prompts to complex workflows. DeepSeek says the V4 models can handle a context window of up to 1 million tokens, large enough to process entire codebases or long documents in a single prompt. That scale reflects a change in how these systems are used, moving from isolated queries to sustained, multi-step tasks.
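For a rough sense of what fits in a window of 1 million tokens, the sketch below estimates a codebase's size in tokens. It is a back-of-the-envelope check under a stated assumption: about 4 characters per token, a common rule of thumb for English text and code that will not match DeepSeek's tokenizer exactly. The helper names `estimate_tokens`, `fits_in_context`, and `read_codebase` are hypothetical.

```python
from pathlib import Path
from typing import Iterable

CONTEXT_WINDOW_TOKENS = 1_000_000  # V4's advertised maximum context length
CHARS_PER_TOKEN = 4                # assumption: rough heuristic, not DeepSeek's tokenizer


def estimate_tokens(text: str) -> int:
    """Roughly estimate the token count of a string (hypothetical helper)."""
    # Ceiling division: any non-empty text counts as at least one token.
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(texts: Iterable[str]) -> tuple[int, bool]:
    """Return the estimated total token count and whether it fits the window."""
    total = sum(estimate_tokens(t) for t in texts)
    return total, total <= CONTEXT_WINDOW_TOKENS


def read_codebase(root: str, suffixes: tuple[str, ...] = (".py", ".md")) -> list[str]:
    """Read every file under `root` whose extension is in `suffixes`."""
    return [
        p.read_text(errors="ignore")
        for p in Path(root).rglob("*")
        if p.is_file() and p.suffix in suffixes
    ]
```

Usage might look like `total, fits = fits_in_context(read_codebase("path/to/repo"))`, where the path is a placeholder. Real prompts also need room for instructions and the model's reply, so a codebase that only just fits by this estimate may not fit in practice.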

The launch lands at a time when cost has become just as important as raw performance. DeepSeek built its reputation on doing more with less, and V4 continues that approach. The system relies on a Mixture-of-Experts design, which routes each token through only a small subset of its experts, so only a few percent of the model’s total parameters are engaged at any given time. The result is lower inference costs without a major drop in output quality.
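The Mixture-of-Experts idea can be illustrated with a toy routing step. This is a minimal, hypothetical sketch in plain Python, not DeepSeek's implementation: a router scores every expert for a given input, only the top-k experts (k=2 here, an arbitrary choice) are actually run, and their outputs are blended with softmax weights. The names `moe_forward`, `router`, and `k` are illustrative.

```python
import math
from typing import Callable

Vector = list[float]
Expert = Callable[[Vector], Vector]  # assumption: each expert maps a vector to a same-size vector


def softmax(xs: Vector) -> Vector:
    """Numerically stable softmax over a short list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    x: Vector, experts: list[Expert], router: list[Vector], k: int = 2
) -> tuple[Vector, list[int]]:
    """Route input x to its top-k experts and mix their outputs.

    Returns the combined output and the indices of the experts that ran.
    """
    # Router score for each expert: dot product of its router row with the input.
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in router]
    # Keep only the k highest-scoring experts; all others are skipped entirely,
    # which is where the compute savings come from.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Turn the chosen experts' scores into mixing weights (gates) that sum to 1.
    gates = softmax([scores[i] for i in chosen])
    out = [0.0] * len(x)
    for gate, i in zip(gates, chosen):
        y = experts[i](x)  # only the selected experts are evaluated
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, chosen
```

Because unselected experts never run, compute per token tracks the active parameters rather than the full parameter count. Production MoE layers add machinery this sketch leaves out, such as load balancing across experts and batched routing on accelerators.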

This balance between capability and efficiency is where DeepSeek is trying to carve out an edge. The company claims its new models outperform several leading systems on standard benchmarks, including OpenAI’s GPT-5.2, though it acknowledges it still trails the very latest models by a few months.

Even so, the message is clear. DeepSeek isn’t chasing dominance through brute force. It’s aiming to change the economics behind it.

That strategy is already influencing the broader market. When the company released its earlier R1 model, it triggered a sharp reaction across global tech stocks, raising doubts about whether the industry’s multi-billion-dollar spending spree on AI infrastructure was sustainable. Since then, investment has surged again, with U.S. tech giants expected to pour hundreds of billions into data centers and compute capacity in the coming years.

V4 enters that environment with a different pitch. It is built to run on more affordable infrastructure, and pricing is expected to fall further once new computing clusters come online. DeepSeek says those clusters will rely on Huawei’s Ascend 950 chips, scheduled to launch in the second half of the year.

For now, access to the top-tier V4 Pro model remains limited. The company points to a shortage of compute resources, a constraint that has become common across the industry as demand for high-performance models outpaces available hardware.

Inside DeepSeek V4: DeepSeek-V4-Pro and DeepSeek-V4-Flash

DeepSeek is splitting its flagship release into two distinct models, each aimed at a different kind of workload.

At the top end is DeepSeek-V4-Pro, a system built for heavy-duty tasks. It runs on a 1.6-trillion-parameter architecture, activating about 49 billion parameters per token. That puts it in the same conversation as leading closed-source models, at least based on early benchmarks.

Then there’s DeepSeek-V4-Flash, a lighter and more efficient option. It uses a 284-billion-parameter design with roughly 13 billion active parameters, making it far cheaper to run. The trade-off is clear: less raw capability, but faster responses and lower cost.
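The two configurations can be compared with a few lines of arithmetic. The sketch below uses only the parameter counts reported for the two models; treating active parameters as a rough proxy for per-token compute is a simplifying assumption, since real cost also depends on attention over long contexts, memory bandwidth, and hardware.

```python
# Parameter counts as reported for the two V4 preview models:
# (total parameters, parameters active per token).
MODELS = {
    "DeepSeek-V4-Pro": (1_600_000_000_000, 49_000_000_000),
    "DeepSeek-V4-Flash": (284_000_000_000, 13_000_000_000),
}


def active_fraction(total: int, active: int) -> float:
    """Share of a model's parameters used for each token."""
    return active / total


for name, (total, active) in MODELS.items():
    print(f"{name}: {active_fraction(total, active):.1%} of parameters active per token")
# DeepSeek-V4-Pro: 3.1% of parameters active per token
# DeepSeek-V4-Flash: 4.6% of parameters active per token

pro_active = MODELS["DeepSeek-V4-Pro"][1]
flash_active = MODELS["DeepSeek-V4-Flash"][1]
print(f"Pro activates {pro_active / flash_active:.1f}x as many parameters per token as Flash")
# Pro activates 3.8x as many parameters per token as Flash
```

Flash uses a slightly larger share of a much smaller parameter pool, which is consistent with its positioning as the cheaper, faster tier.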

The split reflects a broader shift in how AI models are being deployed. Instead of a single system trying to handle everything, companies are starting to offer tiers that balance performance, speed, and cost depending on the use case.

The release is already rippling through markets in China. Shares of domestic chipmakers moved higher as investors bet that demand for local AI hardware will rise alongside models like V4. At the same time, rival model providers are feeling the pressure. Several companies have rushed out updates of their own in recent weeks, trying to keep pace.

DeepSeek’s rise has also caught the attention of China’s largest platform companies. DeepSeek is in early talks with Tencent and Alibaba for its first funding round, a move that could further strengthen its position. Interest from major platform players hints at a deeper shift, where distribution and ecosystem control may matter as much as model performance.

With that attention has come scrutiny. U.S. officials and tech leaders have raised concerns about how DeepSeek trained its models. One issue centers on distillation, a method where one AI system learns from the outputs of another. OpenAI and Anthropic have both suggested they detected such activity linked to DeepSeek. Another concern involves access to restricted hardware, including advanced Nvidia chips that are not supposed to be sold to Chinese firms.

DeepSeek has not addressed those claims in detail, though the questions continue to follow its rapid ascent.

For developers and businesses watching from the sidelines, the bigger takeaway may be less about any single benchmark and more about direction. DeepSeek is pushing a model that narrows the gap with leading systems while driving costs down. If that trend holds, it could reshape how companies decide which AI platforms to build on and how much they are willing to spend.

Not every rival is equally exposed. “Minimax and Zhipu as independent model providers will always be vulnerable to competition especially from internet platforms or cloud service providers which have better reach and distribution,” Vey-Sern Ling, managing director at Union Bancaire Privee, told Bloomberg. “Eventually the gap in model performance will be imperceptible to most users.”

That prediction points to a future where performance differences matter less than access, pricing, and integration. DeepSeek is betting it can win on those terms.

Its influence is already extending beyond model performance. Discussions with Tencent Holdings and Alibaba Group Holding over its first funding round point to a valuation north of $20 billion.

The interest signals how quickly the conversation is shifting. This is no longer just about who builds the best model. It’s about who controls the infrastructure, distribution, and long-term economics of AI.

One year ago, it forced the industry to reconsider its assumptions. With V4, it is trying to do it again.