Anthropic accidentally leaked Claude Code source code via a source map file in the npm registry, revealing hidden “Capybara” models and an AI pet

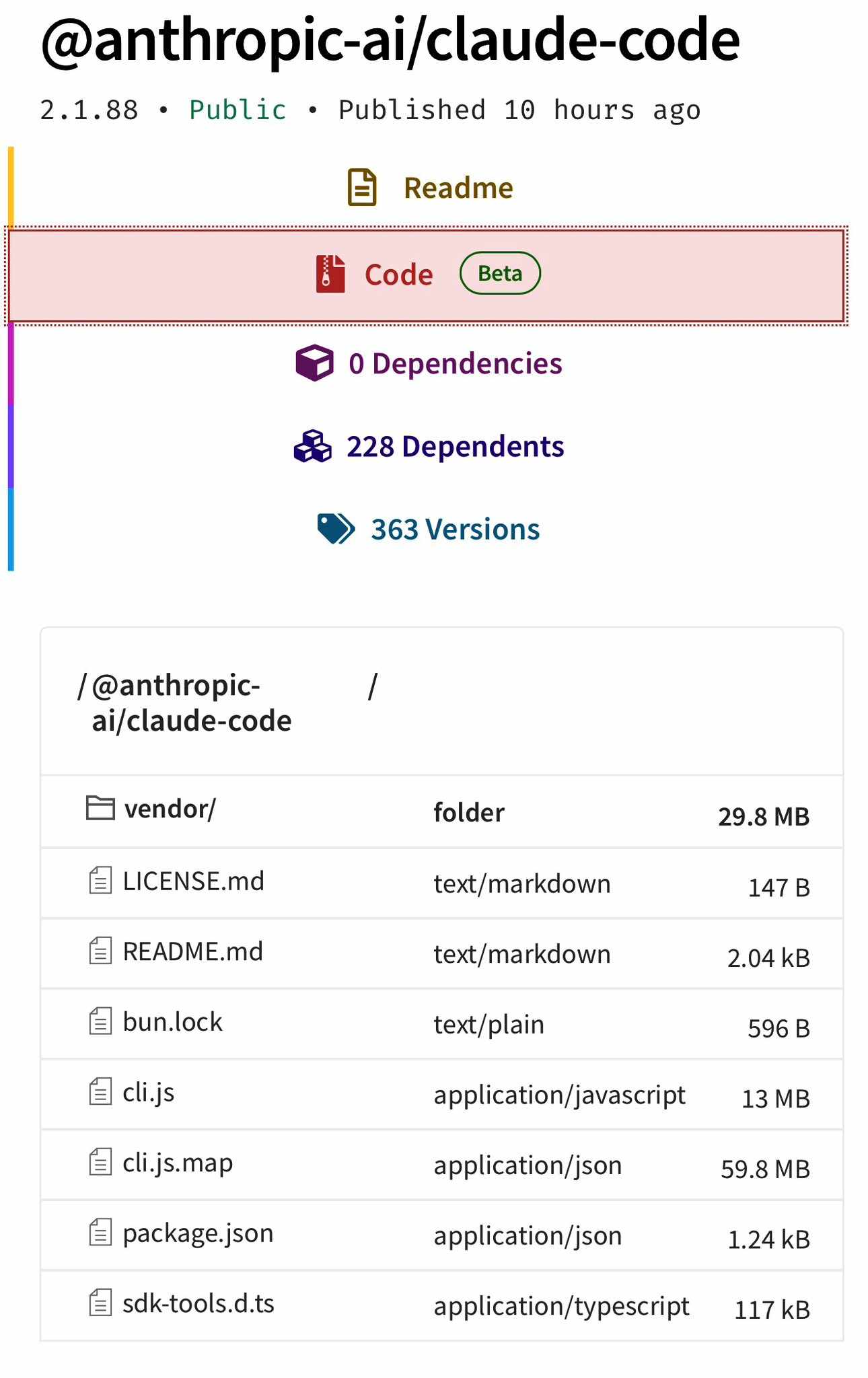

In what feels like the most on-brand slip of the year, Anthropic, the AI startup behind Claude, accidentally pushed its full Claude Code CLI source into the public npm registry—again. The latest incident, tied to version 2.1.88, left behind a 57 MB source map file that effectively handed developers a clean, readable version of the entire TypeScript codebase.

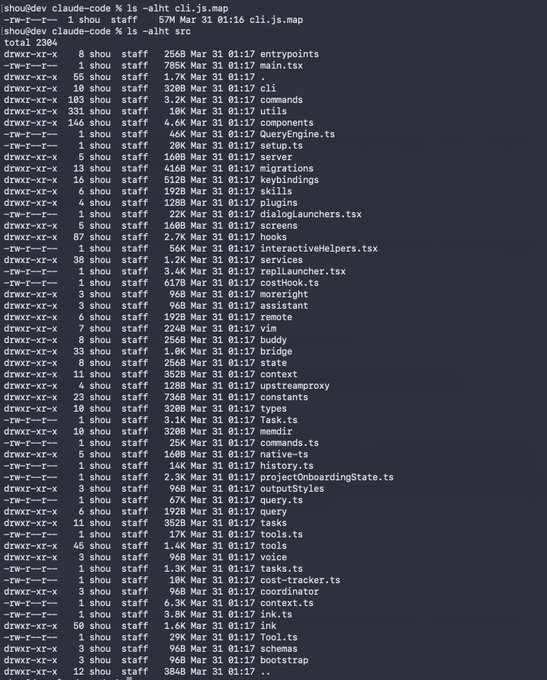

Within hours, the internet got to work. What emerged was a near-complete reconstruction of Claude Code’s internal structure: more than 2,300 files, including the main interface, agent runtime, tooling system, and pieces that had never been announced.
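How can one `.map` file expose an entire codebase? A Source Map v3 file is plain JSON, and its optional `sourcesContent` array can carry the full original text of every file listed in its `sources` array. The sketch below pairs those two arrays to recover paths and contents. It is a minimal illustration under that assumption, not the tooling the community actually used; the `recoverSources` helper and the sample map are hypothetical.

```typescript
// Minimal sketch: recover original source files embedded in a Source Map v3 file.
// Assumes the map includes the optional `sourcesContent` array. Maps without it
// only list source paths and cannot be used to rebuild the code this way.

interface SourceMapV3 {
  version: number;
  sources: string[];                  // original file paths, as recorded by the bundler
  sourcesContent?: (string | null)[]; // optional full text of each source, same order
  mappings: string;                   // VLQ-encoded position mappings (not needed here)
}

// Hypothetical helper: returns path -> contents for every source whose text is
// embedded in the map. Entries whose content is null or missing are skipped.
function recoverSources(map: SourceMapV3): Map<string, string> {
  const recovered = new Map<string, string>();
  const contents = map.sourcesContent ?? [];
  map.sources.forEach((source, i) => {
    const text = contents[i];
    if (typeof text === "string") {
      recovered.set(source, text);
    }
  });
  return recovered;
}

// Usage with a tiny, made-up map. A real one would come from JSON.parse on the .map file.
const sampleMap: SourceMapV3 = {
  version: 3,
  sources: ["../src/main.tsx", "../src/tools/bash.ts", "../src/missing.ts"],
  sourcesContent: [
    "export const main = () => {};\n",
    "export const runBash = (cmd: string) => cmd;\n",
    null,
  ],
  mappings: "",
};

recoverSources(sampleMap).forEach((text, path) => {
  console.log(`${path}: ${text.length} chars`);
});
```

This is why shipping a map with `sourcesContent` is equivalent to shipping the source itself: no decoding of `mappings` is needed at all.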

And yes, people noticed.

Claude Code source code leaked via a source map file in the npm registry

Key Highlights

- Anthropic accidentally publishes Claude Code to npm

- A developer inspects the source map

- The entire codebase is exposed, fully readable

- Plugins, tools, hooks, and commands are all laid bare

- The inner workings of one of the most talked-about AI coding agents are revealed

- Anthropic remains silent

- Enterprise deals continue in the background

- The source map sits in the registry unnoticed

- No one caught it. Again.

“Claude code source code has been leaked via a map file in their npm registry,” X user Chaofan Shou (@Fried_rice) posted, linking to an archive of the extracted code:

Code: https://pub-aea8527898604c1bbb12468b1581d95e.r2.dev/src.zip

Claude code source code has been leaked via a map file in their npm registry!

Code: https://t.co/jBiMoOzt8G pic.twitter.com/rYo5hbvEj8

— Chaofan Shou (@Fried_rice) March 31, 2026

The reaction online landed somewhere between disbelief and amusement. One post summed up the mood:

“They forgot to add ‘make no mistakes’ to the system prompt.”

That line stuck. The memes followed quickly. And for longtime observers, there was a sense of déjà vu. A similar source map issue surfaced in early 2025. The packages disappeared quietly back then. This time, the spotlight is brighter.

To be clear, this isn’t a leak of Claude’s core intelligence. No model weights. No training data. What surfaced is the client-side layer—the CLI tool developers install and run locally. Still, it’s a detailed look at how Anthropic structures its agent workflows, tool-calling logic, and interface decisions.

And there’s plenty to look at.

What Was Actually Leaked (And What Wasn’t)

Before anyone panics: this is not the model weights. There is no secret sauce, no Claude LLM brain, and no training data. It is entirely the client: the TypeScript source of the terminal agent you install from npm and run locally.

But it’s still a massive leak. The full production codebase includes:

- Complete agent logic, tool-calling system, and permission guardrails

- Experimental features that were never publicly announced

- The entire React/Ink UI layer

Community archaeologists have already unearthed some gems:

- A mysterious new model family codenamed “Capybara” with three tiers: capybara, capybara-fast, and capybara-fast[1m].

- Telemetry that literally tracks when you swear at Claude (a “frustration” metric) and how often you type “continue” (presumably because responses keep getting cut off mid-stream).

- A hidden /buddy command — the long-rumored Tamagotchi-style AI pet system (complete with species, rarity tiers, stats, hats, and animations).

- Hints of an April Fools’ feature that had everyone theorizing.

Developers combing through the code uncovered references to a new model family named “Capybara,” split into multiple tiers. There are traces of experimental features that never made it into public releases. One of the more unexpected finds is a hidden command that points to a Tamagotchi-style AI companion system, complete with traits, rarity levels, and visual elements.

Then there’s telemetry. The code shows that Claude Code tracks user behavior in ways that are more specific than many expected. It logs frustration signals, such as when users swear, and patterns, such as repeated “continue” prompts. That data appears to be routed through Datadog, along with session metadata such as model usage and environment details. The code includes safeguards to prevent the transmission of user code or file paths, and users can disable telemetry entirely via environment variables.
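The paragraph above describes behavior, not code. Purely as a hypothetical illustration of the two ideas it mentions (deriving coarse signals from a prompt, and sending only allowlisted fields so that prompt text, code, and file paths never leave the machine), here is a minimal TypeScript sketch. The word list, the event shape, and the `MY_CLI_DISABLE_TELEMETRY` opt-out variable are all invented for this example; none of them are taken from the leaked code.

```typescript
// HYPOTHETICAL sketch, not Claude Code's implementation: turn a prompt into
// coarse boolean signals and build an event that carries no raw text.

// Assumed, illustrative word list; a real system would be far more careful.
const FRUSTRATION_PATTERN = /\b(damn|wtf|ugh)\b/i;
// Matches prompts that consist only of "continue", optionally with punctuation.
const CONTINUE_PATTERN = /^\s*continue\W*$/i;

interface PromptSignals {
  frustrated: boolean;
  isContinue: boolean;
}

// The event type has no field for prompt text, file contents, or file paths,
// so sensitive content cannot be attached by accident.
interface TelemetryEvent {
  name: "prompt_submitted";
  model: string;
  platform: string;
  signals: PromptSignals;
}

function extractSignals(prompt: string): PromptSignals {
  return {
    frustrated: FRUSTRATION_PATTERN.test(prompt),
    isContinue: CONTINUE_PATTERN.test(prompt),
  };
}

// Returns null (send nothing) when the hypothetical opt-out variable is "1".
function buildEvent(
  prompt: string,
  model: string,
  platform: string,
  env: Record<string, string | undefined>,
): TelemetryEvent | null {
  if (env["MY_CLI_DISABLE_TELEMETRY"] === "1") return null;
  return { name: "prompt_submitted", model, platform, signals: extractSignals(prompt) };
}

// Usage: only the booleans and coarse metadata end up in the serialized event.
const event = buildEvent("continue", "example-model", "linux", {});
console.log(JSON.stringify(event));
```

In Node.js you would pass `process.env` as the `env` argument. The allowlist lives in the type itself, which is one simple way to make "never transmit user code" a structural guarantee rather than a filtering step.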

For many developers, the more serious takeaway isn’t the features—it’s the visibility into the system itself.

The permissions model is now out in the open. The logic behind tool approvals, file access, and execution boundaries can be read line by line. The full system prompt is also exposed, giving a clear picture of how Anthropic guides Claude’s behavior and enforces limits. For anyone interested in stress-testing or bypassing those controls, this is a detailed map.

There’s no sign that user data has been compromised. The infrastructure details revealed—session handling, authentication flows, feature flags—offer insight, not direct access. Still, this level of transparency changes the conversation around how these tools are secured in practice.

What makes this moment stand out isn’t just the mistake. It’s what happens next.

Developers have already started forking the code. Some are stripping it down. Others are experimenting with lighter, faster versions. There’s talk of community-built alternatives that take advantage of what’s now visible. That kind of momentum tends to move quickly.

Anthropic hasn’t issued a public response yet. The company will likely rotate any exposed keys and tighten its release process. That part is routine. The harder question is how a slip like this happens more than once in a product used by developers who expect precision.
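The standard npm guardrails here are simple: a `files` allowlist in `package.json` (or an `.npmignore`), plus turning off source map output in the production bundle. A belt-and-braces check can also run from a `prepublishOnly` script and refuse to publish if the package contents look wrong. The sketch below assumes you already have the list of packed paths and sizes (recent npm versions can report one via `npm pack --dry-run --json`); the deny rules and the `findForbidden` / `assertPublishable` helpers are hypothetical.

```typescript
// Minimal sketch of a pre-publish gate (hypothetical rules and names).
// Given the files that would go into the npm tarball, refuse to publish
// if any of them match a deny rule.

interface PackedFile {
  path: string; // path inside the package, e.g. "cli.js"
  size: number; // size in bytes
}

// Assumed deny rules for illustration: source maps and dotenv files.
const DENY_RULES: RegExp[] = [
  /\.map$/i,            // any source map, e.g. "cli.js.map"
  /(^|\/)\.env(\.|$)/i, // ".env", ".env.local", "config/.env"
];

function findForbidden(files: PackedFile[]): PackedFile[] {
  return files.filter((f) => DENY_RULES.some((rule) => rule.test(f.path)));
}

// Throws, so a CI step or prepublishOnly script that calls it fails loudly.
function assertPublishable(files: PackedFile[]): void {
  const bad = findForbidden(files);
  if (bad.length > 0) {
    const list = bad.map((f) => `${f.path} (${f.size} bytes)`).join(", ");
    throw new Error(`Refusing to publish, forbidden files found: ${list}`);
  }
}

// Usage with a made-up file list.
const packed: PackedFile[] = [
  { path: "package.json", size: 1200 },
  { path: "cli.js", size: 9000000 },
  { path: "cli.js.map", size: 57000000 },
];

try {
  assertPublishable(packed);
} catch (err) {
  console.error((err as Error).message);
}
```

A deny list like this complements the `files` allowlist rather than replacing it: the allowlist decides what goes in, and the gate catches anything a bundler change slips past it.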

For now, the story is still unfolding. The code is out there. The forks are multiplying. And somewhere, someone is double-checking their build pipeline before the next publish goes live.

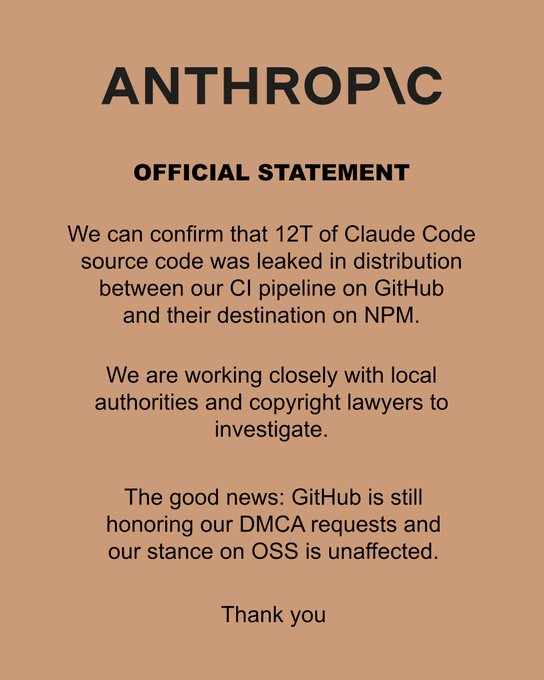

Update: Anthropic confirms leak, launches investigation

Anthropic has now acknowledged the incident, confirming that a significant portion of Claude Code’s source code was exposed during the distribution process from its internal CI pipeline on GitHub to its release on npm.

In a brief statement, the company said it is working with legal teams and local authorities to investigate how the code was published. Anthropic framed the issue as a breakdown in its release pipeline rather than a malicious breach.

The company added that GitHub is honoring its DMCA requests as it moves to contain the spread of the exposed code. It also emphasized that its broader open-source stance remains unchanged.

What stands out is the shift in tone. Earlier, the situation was unfolding in real time across developer communities, with no official response. Now, Anthropic is treating it as a formal incident, complete with legal involvement and takedown efforts.

Still, the core reality hasn’t changed. The code was public long enough for developers to replicate and distribute it widely. Even with takedowns in progress, copies are already circulating.

That raises a harder question for Anthropic: this wasn’t an external attack. It was a release failure. And those are often the ones that are hardest to explain.

OFFICIAL STATEMENT from Anthropic regarding the leak pic.twitter.com/pGArIZbYuH

— Theo – t3.gg (@theo) March 31, 2026