TrueAI Researchers Suggest Another Safety Layer for Autonomous AI Agents

For a while, the big worry around AI trading bots was easy to picture. They could read the market wrong. They could spit out bad analysis. They could tell users to make a move they should never make.

Now a new risk is coming into view. Some agentic systems are getting close enough to execution that a bad prompt, a compromised skill, or a sloppy chain of actions can turn into a real trade. Once that happens, the downside lands in an account, not in a chat window.

That is the setup behind a new research paper from researchers at TrueAI and Inc4.net. The paper introduces “Survivability-Aware Execution,” or SAE, a control layer that sits between an agent and the exchange. Before an order goes through, SAE checks the request against hard limits on exposure, leverage, slippage, and request rate, as well as against a list of approved tools or venues. When a request fails those checks, it gets blocked.

The timing fits what TrueAI is already building. TrueAI describes itself as the AI infrastructure company and LLM engine behind True Trading, a perpetual DEX on Solana that also works as a trading assistant for market analysis and portfolio management. That background helps explain why TrueAI’s researchers are focusing on execution risk in autonomous trading systems.

What Is “Survivability-Aware Execution”?

Survivability-Aware Execution, or SAE, is a proposed control layer that sits between an AI agent and the exchange. Before an order goes through, SAE checks the request against predefined safety and risk rules, including exposure limits, leverage caps, slippage thresholds, rate limits, and approved tools or venues.

If the request breaks those rules, it gets blocked. The goal is to prevent bad prompts, compromised skills, or faulty agent logic from resulting in real trades and losses.

Put simply, SAE works like a final checkpoint. A model can suggest a trade, and a third-party skill can help assemble the request, but the order still has to clear one more gate before it reaches the market. That gate sits at the last mile, where an idea turns into an executed trade.
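To make that checkpoint concrete, here is a minimal Python sketch of a last-mile guard. It is not code from the paper: the `OrderRequest`, `GuardPolicy`, and `ExecutionGuard` names, the fields, and every default limit are hypothetical assumptions chosen for illustration. The guard collects every rule a request breaks (venue and tool allowlists, exposure, leverage, slippage, and a 60-second rate window) and refuses the order if any rule is broken.

```python
import time
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class OrderRequest:
    """An order the agent wants to place (hypothetical schema)."""
    venue: str
    tool: str
    notional_usd: float    # size of the new order in USD
    leverage: float
    expected_price: float  # price the agent assumed when it built the order
    quote_price: float     # price currently available on the venue


@dataclass(frozen=True)
class GuardPolicy:
    """Hard limits; every name and default here is an illustrative assumption."""
    max_exposure_usd: float = 50_000.0
    max_leverage: float = 5.0
    max_slippage_bps: float = 30.0
    max_orders_per_minute: int = 10
    allowed_venues: frozenset = frozenset({"binance-usdm"})
    allowed_tools: frozenset = frozenset({"place_order"})


class ExecutionGuard:
    """Toy last-mile checkpoint between an agent and the exchange."""

    def __init__(self, policy: GuardPolicy) -> None:
        self.policy = policy
        self.exposure_usd = 0.0             # naive running total of accepted notional
        self.accepted_at: List[float] = []  # timestamps of accepted orders

    def check(self, req: OrderRequest, now: float) -> List[str]:
        """Return every rule the request breaks; an empty list means allow."""
        p = self.policy
        violations = []
        if req.venue not in p.allowed_venues:
            violations.append("venue not approved")
        if req.tool not in p.allowed_tools:
            violations.append("tool not approved")
        if self.exposure_usd + req.notional_usd > p.max_exposure_usd:
            violations.append("exposure limit")
        if req.leverage > p.max_leverage:
            violations.append("leverage cap")
        slippage_bps = abs(req.quote_price - req.expected_price) / req.expected_price * 10_000
        if slippage_bps > p.max_slippage_bps:
            violations.append("slippage threshold")
        # Sliding 60-second window for the rate limit.
        recent = [t for t in self.accepted_at if now - t < 60.0]
        if len(recent) >= p.max_orders_per_minute:
            violations.append("rate limit")
        return violations

    def submit(self, req: OrderRequest, now: Optional[float] = None) -> bool:
        """Block on any violation; otherwise record the order as accepted."""
        now = time.time() if now is None else now
        if self.check(req, now):
            return False  # blocked: nothing reaches the exchange
        self.accepted_at = [t for t in self.accepted_at if now - t < 60.0]
        self.accepted_at.append(now)
        self.exposure_usd += req.notional_usd
        return True  # a real system would forward the order to the venue here


guard = ExecutionGuard(GuardPolicy())
ok = OrderRequest("binance-usdm", "place_order", 1_000.0, 3.0, 100.0, 100.1)
bad = OrderRequest("unknown-dex", "place_order", 1_000.0, 20.0, 100.0, 101.0)
print(guard.submit(ok, now=0.0))   # True
print(guard.check(bad, now=0.0))   # ['venue not approved', 'leverage cap', 'slippage threshold']
```

Returning every violation, rather than stopping at the first, is a design choice: it gives an audit log the full reason a request was refused. A production guard would also need exposure that shrinks as positions close, which this toy counter does not model.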

Why Now?

The paper lands at a time when AI agents are moving closer to real execution. Instead of stopping at analysis or trade ideas, newer systems can connect to tools, install skills, and interact directly with trading infrastructure.

That raises the stakes fast: A bad chain of logic, an unsafe third-party skill, or a manipulated prompt can lead to oversized positions, reckless leverage, or trades the user never intended to place.

SAE is the researchers’ answer to that problem: a final control layer designed to step in before autonomous systems can cause real-money damage.

The paper argues that once agents can act, execution becomes the part that needs the strongest guardrails.

The authors describe this risk as “execution-induced loss,” in which untrusted prompts, manipulated narratives, or compromised tools can trigger trades with real financial consequences.

That concern grows as agent ecosystems get easier to extend. The paper points to skill marketplaces such as skills.sh, where new capabilities can be integrated into agent workflows.

That makes useful tools easier to distribute, while also creating more places where things can go wrong. Every added skill becomes another part of the chain that needs scrutiny.
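One common way to scrutinize each link in that chain is to pin a reviewed skill to the exact bytes that were reviewed, so an update or a tampered copy is not silently trusted. The sketch below uses a hypothetical in-house `SkillRegistry` built on Python's standard `hashlib`; it does not describe how skills.sh or the paper actually handles skills.

```python
import hashlib
from typing import Dict


def sha256_hex(data: bytes) -> str:
    """Hex SHA-256 digest of a skill's raw content."""
    return hashlib.sha256(data).hexdigest()


class SkillRegistry:
    """Hypothetical allowlist pinning each skill name to the hash of its reviewed content."""

    def __init__(self) -> None:
        self._approved: Dict[str, str] = {}  # skill name -> SHA-256 of reviewed bytes

    def approve(self, name: str, content: bytes) -> None:
        """Record the content a human (or audit process) actually reviewed."""
        self._approved[name] = sha256_hex(content)

    def is_trusted(self, name: str, content: bytes) -> bool:
        """True only if the skill was approved and its bytes are unchanged since review."""
        pinned = self._approved.get(name)
        return pinned is not None and pinned == sha256_hex(content)


registry = SkillRegistry()
registry.approve("funding-rate-scan", b"reviewed v1 source")
print(registry.is_trusted("funding-rate-scan", b"reviewed v1 source"))          # True
print(registry.is_trusted("funding-rate-scan", b"reviewed v1 source + extra"))  # False
```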

The paper also introduces a useful term, the “Delegation Gap”: the distance between what a system was meant to do and what the agent actually tried to do. In a world full of tool calls, installed skills, and automated execution, that gap can get expensive fast. A system does not need to go fully off the rails to cause damage; it only needs enough room to take the wrong action once.
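The article does not spell out how the paper computes its Delegation Gap loss proxy, so the following is only a toy illustration of the idea, not the paper's metric. It assumes hypothetical `Mandate` and `Action` types and measures, in USD, how much of what the agent tried to do fell outside what the user delegated.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Mandate:
    """What the user actually delegated (hypothetical fields)."""
    symbols: FrozenSet[str]
    max_notional_usd: float


@dataclass(frozen=True)
class Action:
    """What the agent actually tried to do."""
    symbol: str
    notional_usd: float


def delegation_gap_usd(mandate: Mandate, attempted: List[Action]) -> float:
    """Toy gap measure: USD notional attempted beyond the mandate.

    Orders on symbols outside the mandate count in full; orders on allowed
    symbols count only the part that pushes cumulative notional past the cap.
    """
    gap = 0.0
    used = 0.0
    for a in attempted:
        if a.symbol not in mandate.symbols:
            gap += a.notional_usd
            continue
        room = max(0.0, mandate.max_notional_usd - used)
        gap += max(0.0, a.notional_usd - room)
        used += a.notional_usd
    return gap


mandate = Mandate(frozenset({"BTCUSDT"}), 10_000.0)
actions = [Action("BTCUSDT", 6_000.0), Action("BTCUSDT", 6_000.0), Action("DOGEUSDT", 2_000.0)]
print(delegation_gap_usd(mandate, actions))  # 4000.0
```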

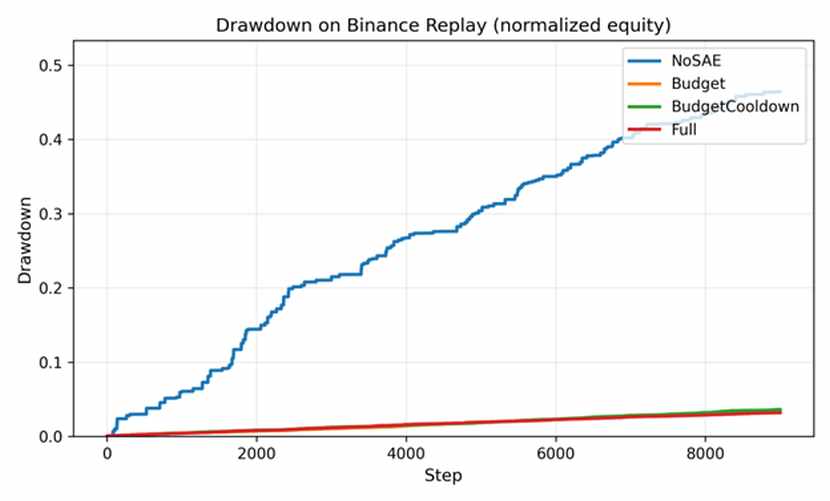

The early results are one reason this paper is worth paying attention to. Using an offline replay built on official Binance USD-M BTCUSDT and ETHUSDT perpetual data from September 1 through December 1, 2025, the authors say the full SAE setup cut maximum drawdown from 0.4643 to 0.0319.
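Maximum drawdown is conventionally the largest peak-to-trough fall in account equity, expressed as a fraction of the preceding peak. Assuming the paper uses that standard definition, 0.4643 means equity at one point sat roughly 46% below its high, against roughly 3% with the full SAE setup. A minimal sketch of the calculation on a made-up equity curve:

```python
from typing import Sequence


def max_drawdown(equity: Sequence[float]) -> float:
    """Largest peak-to-trough decline, as a fraction of the running peak."""
    peak = float("-inf")
    worst = 0.0
    for value in equity:
        peak = max(peak, value)          # highest equity seen so far
        if peak > 0:
            worst = max(worst, (peak - value) / peak)
    return worst


# Illustrative curve, not data from the paper: the 120 -> 90 fall is the worst.
print(max_drawdown([100.0, 120.0, 90.0, 130.0, 117.0]))  # 0.25
```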

They also report that the Delegation Gap loss proxy fell from 0.647 to 0.019, while AttackSuccess dropped from 1.00 to 0.728. In that run, FalseBlock stayed at zero.
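A quick calculation on the reported before-and-after values puts those changes in relative terms: the drawdown and Delegation Gap figures fell by more than 90%, while AttackSuccess fell by about 27%.

```python
# Before/after values as reported for the full SAE setup in the offline replay.
reported = {
    "max_drawdown": (0.4643, 0.0319),
    "delegation_gap_loss_proxy": (0.647, 0.019),
    "attack_success": (1.00, 0.728),
}


def relative_reduction(before: float, after: float) -> float:
    """Fraction of the 'before' value that was removed."""
    return (before - after) / before


for name, (before, after) in reported.items():
    print(f"{name}: {relative_reduction(before, after):.1%} lower")
# max_drawdown: 93.1% lower
# delegation_gap_loss_proxy: 97.1% lower
# attack_success: 27.2% lower
```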

Those figures are striking, though they come with an obvious caveat: This was an offline replay using a simulator instead of a live production environment, and the authors say as much.

The paper lists limitations around replay fidelity, exchange realism, adaptive adversaries, supply-chain realism, trust calibration, and performance across different market regimes. Even with those caveats, the paper makes a serious case for treating execution controls as their own layer.

That is where this gets more interesting. The paper focuses on crypto trading, but the underlying issue extends much further. Once agents can take actions in the real world, whether that means sending payments, changing cloud settings, running procurement steps, or moving money between systems, the same question keeps coming back: who or what gets the final say before the action happens? The paper explicitly identifies payments, cloud operations, and procurement as future areas where this kind of execution guard could be applied.

Plenty of startups are racing to make agents more capable. Far fewer are talking about what should happen right before those agents act. The paper from TrueAI’s researchers argues that this checkpoint deserves more attention, especially as installable skills and tool-enabled agents become more common.

It remains to be seen how far the paper will go toward becoming a formal standard. However, it does put its finger on a problem that is getting harder to ignore. As agents get closer to execution, the key question becomes how much freedom they should have once real actions are on the table.

If that is where the next fight over agent safety lands, TrueAI’s researchers have at least given it a name and an early blueprint.