Mercor confirms breach in LiteLLM supply-chain attack that may have exposed up to 4TB of candidate data and source code

Mercor has confirmed it was caught in a supply-chain attack tied to LiteLLM—an incident that may have exposed up to 4TB of sensitive data, including candidate records, internal code, and identity documents. The disclosure comes days after reports surfaced that the AI hiring startup had been breached, with a well-known hacking group claiming responsibility.

On Tuesday, TechStartups reported that Mercor, a fast-growing AI recruiting and data-labeling company valued at $10 billion, had been hacked after the group LAPSUS$ said it pulled massive amounts of data from the company’s systems through a Tailscale VPN. At the time, the scale of the claimed breach raised questions. Three days later, Mercor confirmed it was affected by a broader compromise tied to LiteLLM, a widely used developer tool in AI workflows.

The company said the incident may have exposed customer and user data and that it was one of many organizations impacted by the same attack chain. Mercor works with clients including OpenAI, Anthropic, and Meta—a detail that adds weight to the breach and its potential downstream effects.

“The privacy and security of our customers and contractors is foundational to everything we do at Mercor. We recently identified that we were one of thousands of companies impacted by a supply chain attack involving LiteLLM.

Our security team moved promptly to contain and remediate the incident. We are conducting a thorough investigation supported by leading third-party forensics experts. We will continue to communicate with our customers and contractors directly as appropriate and devote the resources necessary to resolving the matter as soon as possible,” Mercor said in a post on X.

— Mercor (@mercor_ai) March 31, 2026

The earlier claims from LAPSUS$ painted a far more detailed picture of what may have been taken.

Mercor hacked: What may have been exposed

Based on claims circulating online, the breach appears to span multiple layers of Mercor’s infrastructure—from user data to core systems.

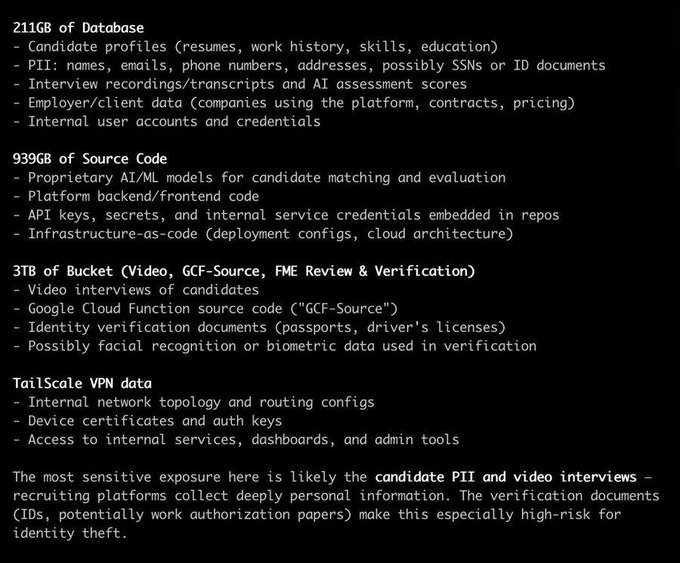

At the database level, attackers claim access to around 211GB of records, including candidate profiles, resumes, work history, and contact details. The dataset may include highly sensitive personal information, such as names, email addresses, phone numbers, addresses, and potentially government-issued identification. Interview transcripts, recordings, and AI-generated evaluation scores were reportedly part of the same pool, along with employer and client data tied to companies using the platform.

The exposure of source code is just as significant. Roughly 939GB of internal repositories were allegedly accessed, including proprietary AI models used for candidate matching, full platform code, and embedded credentials such as API keys and service tokens. Infrastructure configurations tied to cloud deployments may have been included as well.

The largest portion of the breach—close to 3TB—appears to come from storage buckets containing video interviews, identity-verification documents such as passports and driver’s licenses, and internal review systems. Some of this data may involve biometric signals such as facial and voice data.

Attackers also claim to have access to Mercor’s Tailscale VPN environment, which could expose the internal network structure, authentication keys, and administrative systems.

Reports circulating online suggest the total volume of data accessed may reach roughly 4 terabytes.
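As a quick sanity check, the per-category figures claimed above can be summed to see whether they are consistent with the roughly 4TB total. The sketch below uses only the numbers reported in this article; treating "close to 3TB" as 3000 GB and using decimal units (1 TB = 1000 GB) are assumptions made for illustration, and none of the figures have been confirmed by Mercor.

```python
# Claimed volumes in GB, as reported in the article.
# "close to 3TB" is approximated as 3000 GB (an assumption for illustration).
claimed_gb = {
    "database records": 211,
    "source code repositories": 939,
    "storage buckets": 3000,
}

total_gb = sum(claimed_gb.values())
total_tb = total_gb / 1000  # decimal terabytes (1 TB = 1000 GB)

print(f"{total_gb} GB is about {total_tb:.2f} TB")
```

Under those assumptions, the three claimed categories add up to a little over 4TB, which is in line with the total circulating online.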

If those claims hold, the breach reaches into the most sensitive parts of Mercor’s platform—where personal identity, career history, and biometric data intersect. Recruiting systems handle deeply personal information, and exposure at this level poses serious risks, including identity theft and long-term misuse of biometric data.

Access to Mercor’s Tailscale VPN could have given attackers a direct line into internal systems, turning a single entry point into broad system-level access.

The attackers have claimed the breach followed failed ransom negotiations and have hinted at selling or releasing the data. Mercor has not confirmed those details.

What stands out here is not just the scale of the data involved, but the path the attackers took. This was not a direct hit on Mercor’s own codebase. It appears to have come through a dependency—LiteLLM—used across AI development stacks. That kind of indirect entry point is becoming a recurring pattern.

AI startups are moving fast, often relying on open-source libraries and third-party connectors to ship products quickly. That speed creates hidden risk. A single compromised dependency can ripple across thousands of companies at once, turning a small weakness into a broad attack surface.

This incident exposes a deeper shift in the AI ecosystem. The risk is no longer just flawed code—it’s the dependencies behind it.

As startups race to ship faster, shared tooling is quietly becoming a single point of failure. In this environment, security isn’t a feature. It’s becoming the dividing line between companies that scale—and those that get exposed.