LAPSUS$ claims massive breach of AI hiring startup Mercor, says 4TB of data taken via Tailscale VPN

A cybercrime group with a track record of hitting some of tech’s biggest names says it has struck again—this time going after one of the fastest-growing companies in the AI talent pipeline.

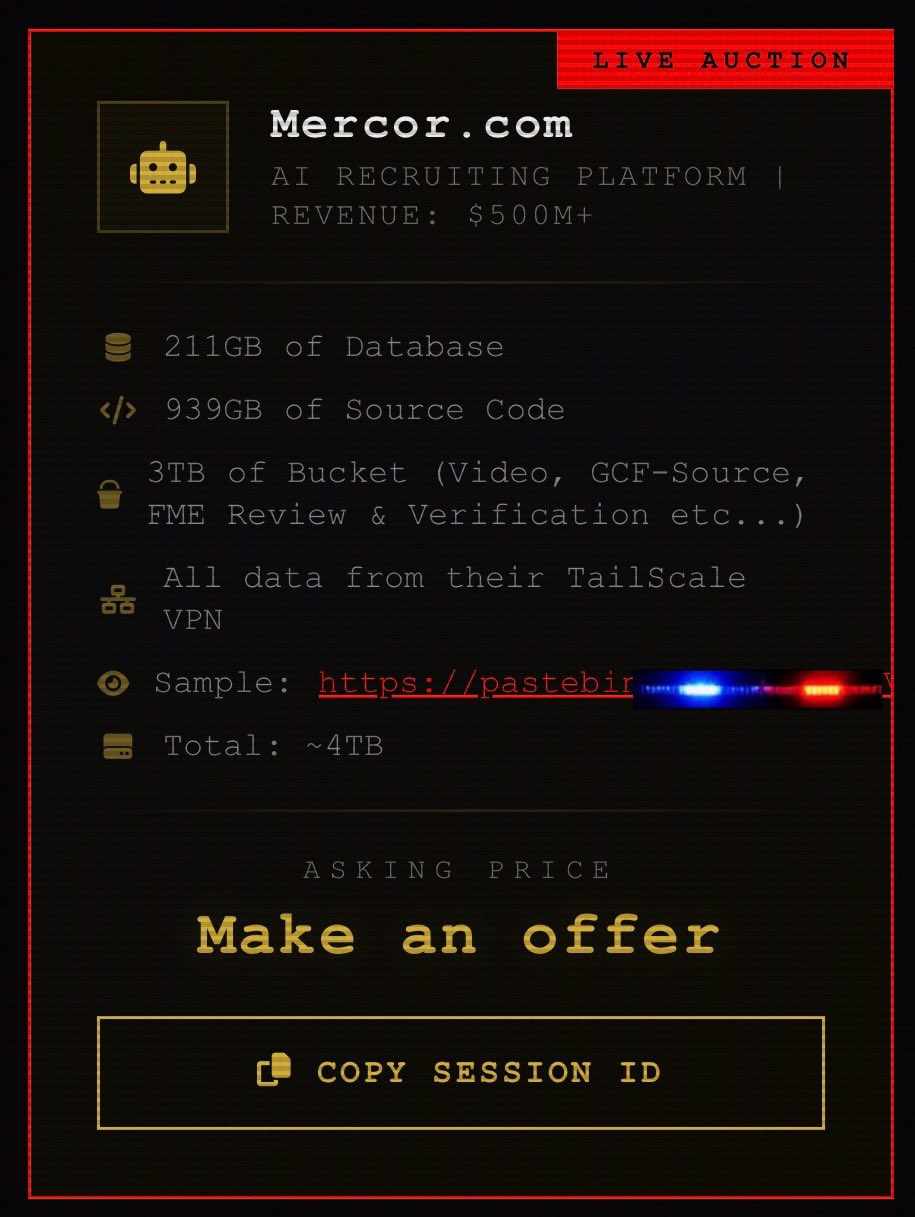

Posts circulating across X and Reddit point to a claim by LAPSUS$ that it breached Mercor, an AI hiring and evaluation startup, and pulled out roughly 4 terabytes of internal data. The group says the haul includes source code, candidate databases, and thousands of recorded interviews tied to identity verification.

The numbers, if accurate, are staggering. LAPSUS$ claims it accessed 939GB of source code, 211GB of database records containing resumes and personal data, and nearly 3TB of stored files, which together account for the roughly 4TB total. That storage reportedly includes video interviews containing face and voice data, along with KYC documents and passports. The group also says it obtained full access to Mercor’s Tailscale VPN environment, which could have let it reach much of the company’s internal systems.

According to the posts, the public claim came after ransom negotiations failed. The attackers now say they may sell or release the data.

“Mercor AI has allegedly been breached by Lapsus

939GB of source code

4TB of data in total

All data from their TailScale VPN,” cybersecurity analyst and security researcher Dominic Alvieri (@AlvieriD) said in a March 31, 2026 post on X.

A high-growth AI startup with high-value data

Mercor has quickly become a key player in the AI ecosystem. Founded in 2023 by 22-year-old college dropouts Brendan Foody, Surya Midha, and Adarsh Hiremath, the San Francisco startup shifted from freelance matching into a core role supporting AI model training and evaluation. TechStartups featured Mercor late last year after the AI hiring startup raised $350 million at a $10 billion valuation.

The company connects domain experts—ranging from doctors to engineers—with leading AI labs. Those experts review model outputs, test systems, and participate in structured interviews. Much of that process is recorded, verified, and stored, creating a large repository of sensitive material.

Mercor says it has scaled quickly, with tens of thousands of experts on its platform and a reported annual revenue run rate in the hundreds of millions of dollars. That growth has made it a valuable partner to AI labs and a high-value target for attackers.

The nature of its data makes the stakes even higher. Video interviews, identity documents, and detailed professional profiles are used alongside proprietary systems to match and evaluate talent. For attackers, that mix offers multiple paths to profit, from identity fraud to corporate espionage.

How the breach may have happened

The initial entry point remains unconfirmed. Posts tied to the incident suggest a developer may have exposed production credentials through an AI coding assistant linked to Anthropic. Incidents like this have started to surface more often as developers rely on AI tools in day-to-day workflows.
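To make that kind of exposure concrete, here is a minimal sketch of redacting credential-looking strings from text before it is pasted into any external tool, an AI assistant included. It is not a description of what happened at Mercor or of any vendor's tooling. The function name `redact_secrets` and the three patterns are illustrative assumptions, and a real secret scanner would cover far more formats.

```python
import re

# Illustrative patterns only (assumptions, not an exhaustive or official list).
SECRET_PATTERNS = {
    # Strings shaped like "tskey-<type>-<rest>", the prefix style used by Tailscale keys.
    "tailscale_key": re.compile(r"tskey-[A-Za-z0-9]+-[A-Za-z0-9-]+"),
    # AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Inline assignments such as "password: value" or "secret=value".
    "inline_password": re.compile(r"(?i)\b(password|passwd|secret)\s*[=:]\s*\S+"),
}


def redact_secrets(text):
    """Return (redacted_text, findings); findings lists the pattern names that matched."""
    findings = []
    for name, pattern in SECRET_PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED:{name}]", text)
        if count:
            findings.append(name)
    return text, findings


if __name__ == "__main__":
    # Hypothetical snippet a developer might paste while debugging; the key is fake.
    sample = "export TS_AUTHKEY=tskey-auth-EXAMPLE123-notARealKey\ndb password: hunter2"
    redacted, findings = redact_secrets(sample)
    print(redacted)
    print(findings)
```

Pattern matching of this kind only catches secrets with a recognizable shape, so it complements short-lived, narrowly scoped credentials rather than replacing them.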

Once inside, the attackers reportedly used Tailscale access to move through internal systems and extract data. Tools like Tailscale are widely used and considered secure, but how much a single credential can reach depends heavily on configuration and credential hygiene. One exposed key can create a path across an entire network.
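The point about configuration can be shown with a toy model. The sketch below is not Tailscale's policy engine or its ACL syntax, and the device names, tags, and rules are all hypothetical. It only compares what one leaked credential can reach under an allow-everything rule set versus a scoped one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    src: str  # tag of the device presenting the credential, or "*" for any
    dst: str  # tag of the destination device, or "*" for any


def reachable(key_tag, device_tags, rules):
    """Return the sorted device names a credential tagged key_tag may reach."""
    allowed = set()
    for name, tag in device_tags.items():
        for rule in rules:
            if rule.src in ("*", key_tag) and rule.dst in ("*", tag):
                allowed.add(name)
                break
    return sorted(allowed)


# Hypothetical inventory: device name -> tag.
DEVICES = {
    "ci-runner": "ci",
    "source-repo": "code",
    "candidate-db": "data",
    "file-store": "data",
}

PERMISSIVE = [Rule("*", "*")]                            # any device may reach any device
SCOPED = [Rule("ci", "code"), Rule("backend", "data")]   # least-privilege style rules

if __name__ == "__main__":
    print(reachable("ci", DEVICES, PERMISSIVE))  # every device in the inventory
    print(reachable("ci", DEVICES, SCOPED))      # only the source repository
```

Under the permissive rules a leaked CI credential reaches all four devices, while the scoped rules limit it to the source repository. That difference is what "configuration and credential hygiene" comes down to in practice.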

What’s at risk

If the claims hold, the fallout could stretch far beyond a single company.

For individuals, the exposure of video interviews and identity documents raises concerns that go well past typical data breaches. Face and voice data can be used to generate deepfakes, and unlike passwords, biometrics can’t be reset.

For Mercor, the loss of source code and internal systems could undermine trust with customers, especially AI labs that depend on the platform for sensitive evaluation work. It may also trigger scrutiny under privacy regulations such as GDPR and CCPA.

For the AI industry, the implications are broader. Mercor sits close to the workflows for testing and refining advanced models. Any leak tied to those processes could offer insight into how leading systems are built and evaluated.

Silence so far

As of publication, Mercor has not issued a public statement. Its website and social channels show no reference to the incident, and there has been no formal disclosure to users.

LAPSUS$, meanwhile, has used public leaks to pressure targets in the past. The group first drew attention in 2022 after breaching companies including Microsoft and Nvidia. More recently, activity linked to similar groups has targeted enterprise platforms in large-scale extortion campaigns.

A growing pattern in AI security

The claim lands at a time when AI startups are scaling at breakneck speed. Hiring, infrastructure, and product development often move ahead faster than security practices can keep up. That gap is becoming a clear opportunity for attackers.

Credential leaks, internal tooling exposure, and third-party integrations are now common weak points. As companies rely more on AI-assisted development, the line between productivity and risk is starting to blur.

This story is still developing. Users who have completed interviews or submitted identity documents through Mercor may want to keep a close eye on financial and online accounts, and consider adding fraud alerts or credit monitoring as a precaution.

Note: This report is based on publicly circulating claims by LAPSUS$ and information about Mercor’s operations. The breach has not been independently verified.